
TABLE OF CONTENTS

NOVEMBER/DECEMBER 2016

Volume 22, Number 11/12

DOI: 10.1045/november2016-contents

ISSN: 1082-9873

EDITORIAL

Holiday D-Lib

Editorial by Laurence Lannom, Corporation for National Research Initiatives

ARTICLES

Assessing Stewardship Maturity of the Global Historical Climatology Network-Monthly (GHCN-M) Dataset: Use Case Study and Lessons Learned

Article by Ge Peng, Cooperative Institute for Climate and Satellites-North Carolina, North Carolina State University and NOAA's National Centers for Environmental Information; Jay Lawrimore, Christina Lief, Richard Baldwin, Nancy Ritchey, Danny Brinegar and Stephen A. Del Greco, NOAA's National Centers for Environmental Information; Valerie Toner, STG, Inc. and NOAA's National Centers for Environmental Information

Abstract: Assessing stewardship maturity (the current state of how datasets are documented, preserved, stewarded, and made accessible publicly) is a critical step towards meeting U.S. federal regulations, organizational requirements, and user needs. The scientific data stewardship maturity matrix (DSMM), developed in partnership with NOAA's National Centers for Environmental Information (NCEI) and the Cooperative Institute for Climate and Satellites-North Carolina (CICS-NC), provides a consistent framework for assessing stewardship maturity of individual Earth Science datasets and capturing justifications for transparency. The consolidated stewardship maturity information will allow users and decision-makers to make informed use decisions based on their unique data needs. This DSMM was applied to a widely utilized monthly-land-surface-temperature dataset derived from the Global Historical Climatology Network (GHCN-M). This paper describes the stewardship maturity ratings of GHCN-M version 3 and provides actionable recommendations for improving the maturity of the dataset. The results from the use case study show that an application of DSMM like this one is useful to people who produce or care for digital environmental datasets. Assessments can identify the strengths and weaknesses of an individual dataset or organization's preservation and stewardship practices, including how information about the dataset is integrated into different systems.

Intake of Digital Content: Survey Results From the Field

Article by Jody L. DeRidder and Alissa Matheny Helms, University of Alabama Libraries

Abstract: The authors developed and administered a survey to collect information on how cultural heritage institutions are currently managing the incoming flood of digital materials. The focus of the survey was the selection of tools, workflows, policies, and recommendations from identification and selection of content through processing and providing access. Results are compared with similar surveys, and a comprehensive overview of the current state of research in the field is provided, with links to helpful resources. It appears that processes, workflows, and policies are still very much in development across multiple institutions, and the development of best practices for intake and management is still in its infancy. In order to build upon the guidance collected in the survey, the authors are seeking to engage the community in developing an evolving community resource of guidelines to assist professionals in the field in facing the challenges of intake and management of incoming digital content.

Technical Debt as an Indicator of Library Metadata Quality

Article by Kevin Clair, University of Denver

Abstract: Metadata production is a significant cost for any digital library program. As such, care should be taken to ensure that when metadata is created, it is done in such a way that its quality will not be low enough to be a liability to current and future library applications. Agile software development uses the concept of "technical debt" to place metrics on the ongoing costs associated with low-quality code, and determine resource allocation for strategies to improve it. This paper applies the technical debt metaphor in the context of metadata management, identifying common themes across the qualitative and quantitative literature related to technical debt, and connecting it to similar themes in the literature on metadata quality assessment. It concludes with areas of future research in the area of technical debt and metadata management, and ways in which the metaphor may be integrated into other current avenues of metadata research.

A Doomsday Scenario: Exporting CONTENTdm Records to XTF

Article by Andrew Bullen, Illinois State Library

Abstract: Due to the challenging state budget situation in Illinois, Andrew Bullen of the Illinois State Library was asked to explore how the existing CONTENTdm collections could be migrated to another platform should the State Library be unable to pay its bill for its existing services. He chose XTF, a resource at hand and a logical candidate for the transfer, along with already available methods that would allow rapid migration. He describes in detail the process of transferring three collections that represented a reasonable cross section of Illinois Digital Archives content from CONTENTdm to XTF, and offers an evaluation of his test migration approach and lessons learned.

NEWS & EVENTS

In Brief: Short Items of Current Awareness

In the News: Recent Press Releases and Announcements

Clips & Pointers: Documents, Deadlines, Calls for Participation

Meetings, Conferences, Workshops: Calendar of Activities Associated with Digital Libraries Research and Technologies


FEATURED DIGITAL COLLECTION

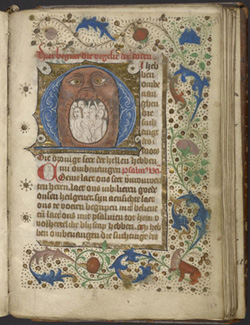

Historiated initial, Mouth of Hell, Initial M (Book of Hours, University of Pennsylvania Libraries, Ms. Codex 738, fol. 127r, in the public domain.)

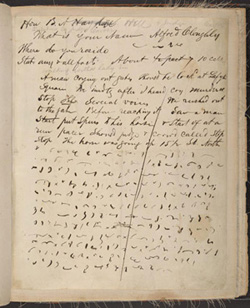

Cpl. James Tanner's long- and shorthand transcription of testimony from eyewitnesses of Lincoln's assassination, taken in the house where Lincoln lay dying. (Abraham Lincoln Foundation of The Union League of Philadelphia, document XI.2.Lincolniana, fol. 4r, in the public domain.)

OPenn, created by the Schoenberg Institute for Manuscript Studies at the University of Pennsylvania Libraries, makes digitized cultural heritage material freely available and accessible to the public. All images and metadata on OPenn can be freely studied, applied, copied, or modified by anyone, for any purpose. The data set for each digitized item consists of publication-quality TIFF files, web and thumbnail JPEG images, and TEI XML descriptions, which are all available under various Creative Commons licenses. In fact, much of the material is released into the public domain. These licensing structures permit users to have unmediated access to any data we provide, from a single image to the entire data set. As such, OPenn is a major part of Penn Libraries' initiative to embrace open data.

Data on OPenn can be browsed by humans through the collections pages, but the directory structure of the data on OPenn makes it easy to use computers to access the data and download it in bulk using simple tools. The Technical ReadMe provides information on how the site is structured and detailed instructions and recipes for downloading files from the site.

Digitized medieval and Renaissance manuscripts make up the majority of the 47 TB of data currently available on OPenn. OPenn launched on May 1, 2015 with the entire corpus of manuscripts donated to the Penn Libraries by Lawrence J. Schoenberg and his wife Barbara Brizdle Schoenberg. The Schoenberg Collection features manuscripts from all over the world, with a focus on science, technology, engineering and mathematics. More datasets, including manuscripts from the University of Pennsylvania's Rare Book and Manuscript Library holdings and items from other institutions, soon followed. Historic diaries from a variety of institutions belonging to the Philadelphia Area Consortium of Special Collections Libraries (PACSCL) are also available on OPenn. Many of these documents are unknown while others are celebrated, such as the Union League's Tanner manuscript: a firsthand account of the events surrounding the assassination of Abraham Lincoln.

Recently OPenn took over the hosting of The Digital Walters, a data set of digitized manuscripts and TEI manuscript descriptions from the Walters Art Museum manuscript collection. OPenn also now hosts the data sets for the Archimedes palimpsest and the Galen Syriac palimpsest. While OPenn is mainly a portal for accessing data, it is designed so that interfaces can be built that interact with the data or display it in a more searchable or user-friendly way. For example, the Walters Art Museum just launched a new interface, Walters Ex Libris, which draws the data from OPenn but allows users to do faceted searches of Walters' manuscripts and view the codices in a page-turning application.

Most of the content on OPenn from the University of Pennsylvania and the recently added data from the Walters Art Museum were generated with funding from digitization and access grants from the National Endowment for the Humanities. OPenn continues to grow as more items from the University of Pennsylvania and other institutions are identified and added, and it remains a place to host data from projects that support open data. By 2019, over 450 manuscripts will be added to OPenn thanks to a CLIR grant to digitize all medieval and Renaissance manuscripts from 15 different institutions in the Philadelphia area.

D-LIB EDITORIAL STAFF

Laurence Lannom, Editor-in-Chief

Allison Powell, Associate Editor

Catherine Rey, Managing Editor

Bonita Wilson, Contributing Editor
